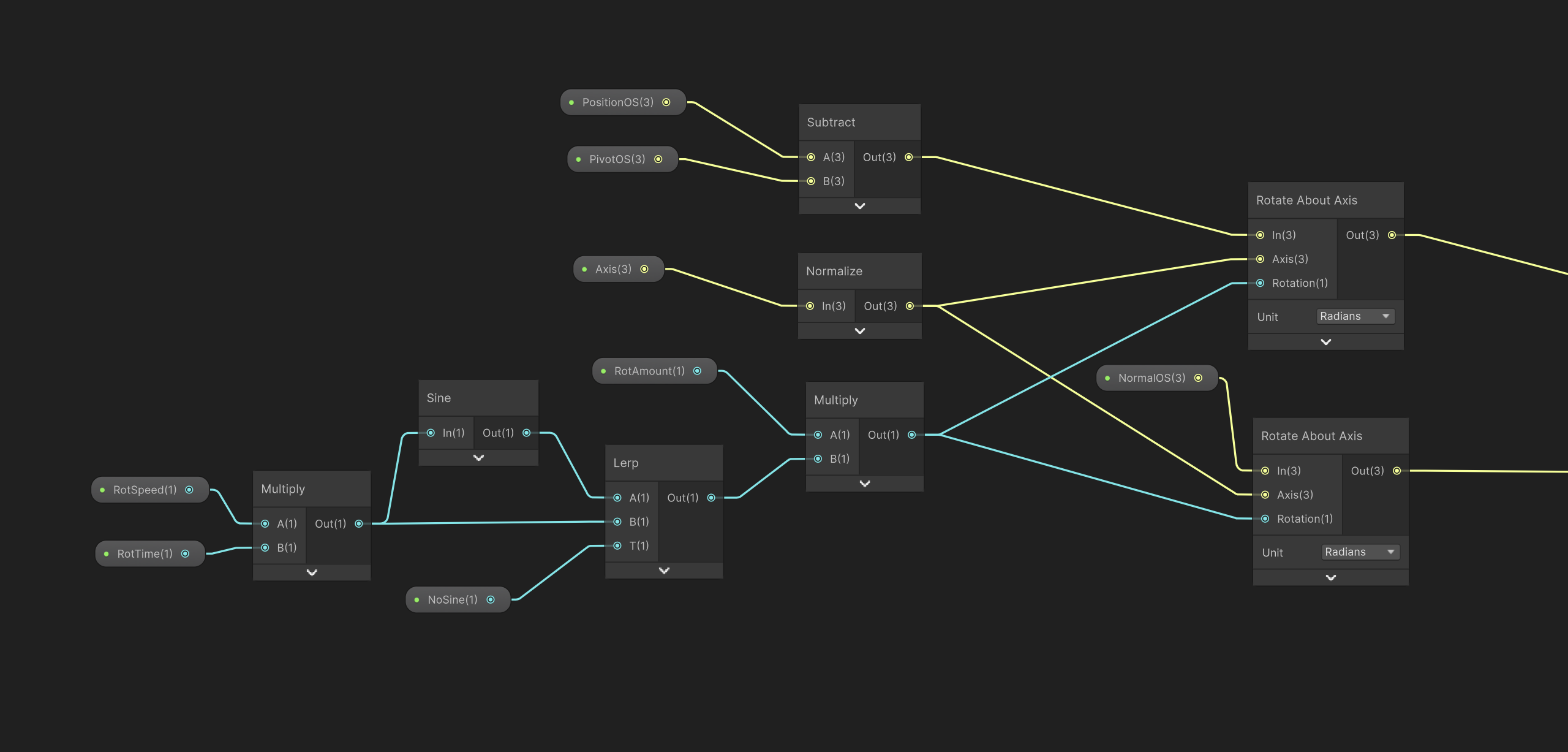

Rotation subgraph

This subgraph is the base for several of the robot's movements. Instead of using bones or animation clips, it rotates parts of the mesh directly in the shader.

In my setup, the position input is driven by a vertex color mask. This means the subgraph does not affect the whole mesh at once, only the part selected by that mask.

This made it possible to animate different parts of the robot separately.
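To illustrate the masking idea outside of Shader Graph, here is a minimal Python sketch. It assumes the mask is a per-vertex value between 0 and 1 and that it blends each vertex between its original and rotated position. The names `rotate_z` and `masked_rotate` are hypothetical, and the actual graph may wire the mask in differently.

```python
import math

def rotate_z(p, angle):
    """Rotate point p = (x, y, z) around the Z axis by angle (radians)."""
    x, y, z = p
    c, s = math.cos(angle), math.sin(angle)
    return (x * c - y * s, x * s + y * c, z)

def masked_rotate(vertices, masks, angle):
    """Blend each vertex between its original and rotated position.

    Assumption: mask 0 leaves the vertex untouched, mask 1 applies the
    full rotation (a plain linear interpolation, like a Lerp node).
    """
    out = []
    for p, m in zip(vertices, masks):
        r = rotate_z(p, angle)
        out.append(tuple(a + (b - a) * m for a, b in zip(p, r)))
    return out
```

With a mask of 0 a vertex stays where it is, and with a mask of 1 it receives the full rotation, which is what lets one mesh carry several independently moving parts.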

The axis is chosen depending on the type of motion needed. By changing the axis, I can decide the direction each part rotates in.

The movement itself is controlled by values such as time, speed, and rotation amount.

These values are combined to create either a continuous spinning motion or a softer back-and-forth movement, depending on how the subgraph is used.
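As a rough sketch of how those values might combine, the two motion types can be written as two small angle functions. This is an assumption about the math, not a copy of the graph: `spin_angle` and `sway_angle` are hypothetical names, and the sine wave is just one common way to get a soft back-and-forth motion.

```python
import math

def spin_angle(time, speed):
    """Continuous spin: the angle keeps growing with time."""
    return time * speed

def sway_angle(time, speed, amount):
    """Back-and-forth sway: a sine wave keeps the angle within +/- amount."""
    return math.sin(time * speed) * amount
```

Feeding `spin_angle` into the rotation gives a part that turns endlessly, while `sway_angle` never rotates further than `amount` in either direction.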

At the core of the setup is Unity's Rotate About Axis node. This node rotates a position around a chosen pivot point and axis by a given rotation value.

In this case, it is used to rotate both the vertex position and the normal, so the mesh deforms and shades correctly as it moves.
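For readers who want to see the underlying math, the sketch below rotates a vector around an arbitrary axis using Rodrigues' rotation formula, then applies it to a position and a normal. It is an illustration under assumptions, not Unity's implementation: the function names are hypothetical, the pivot is handled by subtracting it before the rotation and adding it back afterwards, and the rotation direction may be mirrored relative to what the node produces.

```python
import math

def rotate_about_axis(v, axis, angle):
    """Rotate vector v around axis by angle (radians), Rodrigues' formula."""
    ax, ay, az = axis
    length = math.sqrt(ax * ax + ay * ay + az * az)
    kx, ky, kz = ax / length, ay / length, az / length  # normalized axis
    x, y, z = v
    c, s = math.cos(angle), math.sin(angle)
    dot = kx * x + ky * y + kz * z
    # cross product k x v
    cx, cy, cz = ky * z - kz * y, kz * x - kx * z, kx * y - ky * x
    return (
        x * c + cx * s + kx * dot * (1 - c),
        y * c + cy * s + ky * dot * (1 - c),
        z * c + cz * s + kz * dot * (1 - c),
    )

def rotate_vertex(position, normal, pivot, axis, angle):
    """Rotate a position around a pivot and rotate its normal to match."""
    # Positions are moved into pivot-relative space, rotated, and moved back.
    local = tuple(p - q for p, q in zip(position, pivot))
    rotated = rotate_about_axis(local, axis, angle)
    new_position = tuple(r + q for r, q in zip(rotated, pivot))
    # Normals are directions, so they are rotated without any pivot offset.
    new_normal = rotate_about_axis(normal, axis, angle)
    return new_position, new_normal
```

The normal gets the same rotation as the position but no pivot offset, because it describes a direction rather than a location.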

Each of these rotation steps is then combined at the end. The output position from one subgraph can be passed into the next one and then into another, allowing several motion layers to build on top of each other. This made it possible to create more complex animation by stacking multiple simple rotation setups together.
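The stacking idea can be sketched as a loop in which each rotation takes the previous result as its input. This is a simplified illustration: every layer here rotates around the Z axis, and `rotate_z_about` and `apply_layers` are hypothetical names rather than nodes from the graph.

```python
import math

def rotate_z_about(p, pivot, angle):
    """Rotate point p around a pivot, about the Z axis, by angle (radians)."""
    x, y = p[0] - pivot[0], p[1] - pivot[1]
    c, s = math.cos(angle), math.sin(angle)
    return (x * c - y * s + pivot[0], x * s + y * c + pivot[1], p[2])

def apply_layers(p, layers):
    """Feed the output position of each layer into the next one.

    layers is a list of (pivot, angle) pairs, applied in order.
    """
    for pivot, angle in layers:
        p = rotate_z_about(p, pivot, angle)
    return p
```

For example, the first layer could swing an arm around its shoulder and the second could turn the whole body, so the arm inherits the body's motion on top of its own.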